SciTech

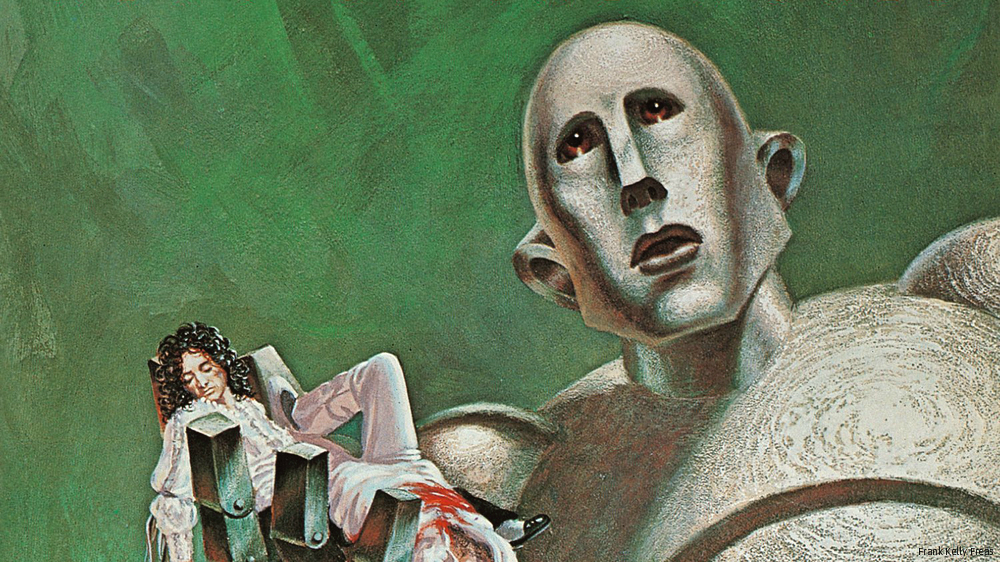

Putting the ethics in robotics: Testing Asimov's First Law

By BEA MONTENEGRO

Are you willing to place your life in the hands of a robot? In a future where robots could possibly be used in almost all aspects of our everyday lives, you may not have a choice.

Renowned science fiction author Isaac Asimov outlined a set of rules called the Three Laws of Robotics. The rules served as part of the programming of almost all the robots featured in his futuristic works.

First introduced in the short story "Runaround" in 1942, the rules—later expanded into four laws in subsequent works—are:

0. A robot may not harm humanity, or, by inaction, allow humanity to come to harm.
1. A robot may not injure a human being or, through inaction, allow a human being to come to harm.
2. A robot must obey the orders given to it by human beings, except where such orders would conflict with the First Law.
3. A robot must protect its own existence as long as such protection does not conflict with the First or Second Law.

Alan Winfield, a roboticist at the Bristol Robotics Laboratory, conducted an experiment in which he and his colleagues tested a simplified version of the First Law, using another robot as a stand-in for a human being.

Presented at the Towards Autonomous Robotic Systems meeting held in Birmingham, the experiment seemed like a success. However, when the scientists introduced two "humans" at the same time, problems arose. In some scenarios the robot was only able to save one human. In others, it spent too much time trying to decide and in the end was unable to save either one.

According to Winfield, his robot is forced to behave according to its programming, despite the fact that it does not understand the reasoning behind it. The question now is: Can robots make ethical decisions?

Military combat robots may provide the first few steps towards finding an answer.

Scientist Ronald Arkin from the Georgia Institute of Technology has devised a set of algorithms that allow robots to make decisions on the battlefield. During test runs, robots with this programming sometimes choose to minimize casualties when in areas like schools or hospitals.

According to Wendell Wallach, the author of "Moral Machines: Teaching robots right from wrong," studies like those conducted by Winfield can be the basis of future, more complex robotics programming. "If we can get them to function well in environments when we don't know exactly all the circumstances they'll encounter, that's going to open up vast new applications for their use." — TJD, GMA News
